Best Prompt Engineering Tools for AI Success

This article was generated with AI assistance and reviewed by human experts. Last updated: April 2026.

Are you tired of AI models giving you generic, unhelpful, or downright bizarre responses? The frustration of crafting the perfect prompt only to receive lackluster results is a common pain point for anyone working with AI. This isn’t a reflection of your intelligence, but often a symptom of not having the right tools to bridge the gap between your intent and the AI’s understanding. The best prompt engineering tools are designed to solve this exact problem, offering structure, insight, and efficiency to your AI interactions.

Table of Contents

- Why Prompt Engineering Tools Are Essential

- What Exactly Are Prompt Engineering Tools?

- What Key Features Should the Best Prompt Engineering Tools Have?

- Top Prompt Engineering Tools to Consider

- How to Choose the Right Prompt Engineering Tool for Your Needs

- Integrating Prompt Engineering Tools into Your Workflow

- The Evolving Future of Prompt Engineering Tools

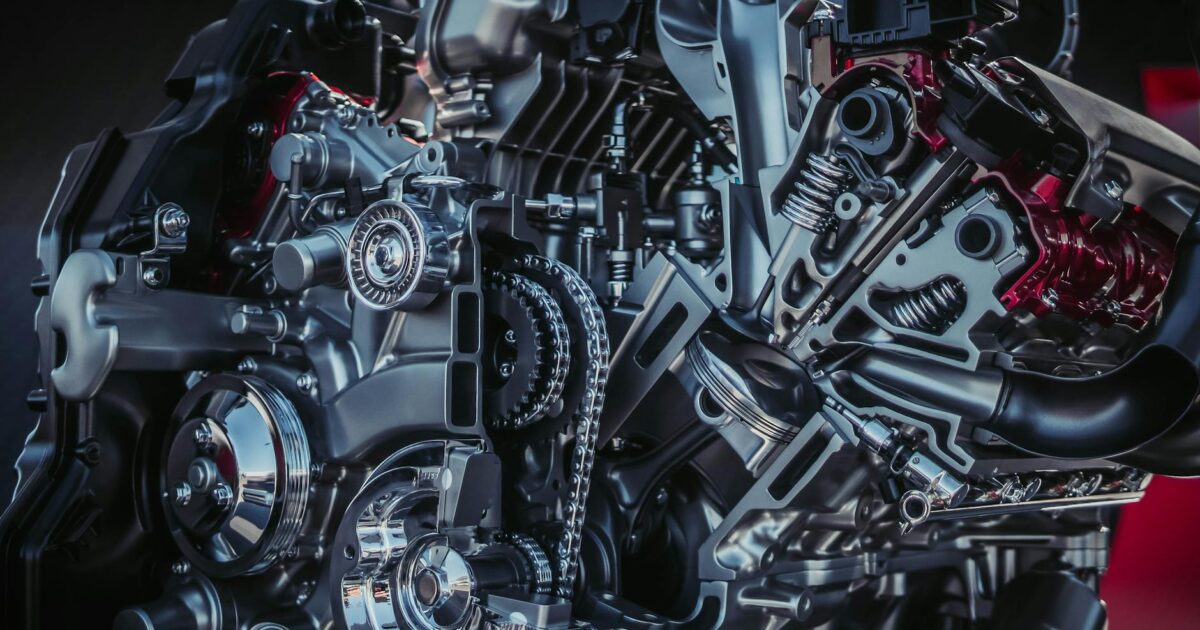

Why Prompt Engineering Tools Are Essential

Prompt engineering tools are crucial because they demystify the interaction with complex AI models. They provide structured ways to test, refine, and manage prompts, leading to more predictable and higher-quality AI outputs. Without them, achieving consistent results relies heavily on trial and error, which is inefficient and costly.

[Image: Diagram showing the prompt engineering process with tools for refinement. Caption: Streamlining AI interactions with specialized tools.]

What Exactly Are Prompt Engineering Tools?

Prompt engineering tools are software applications, platforms, or frameworks designed to assist users in creating, testing, optimizing, and managing prompts for large language models (LLMs) and other generative AI systems. They act as an intermediary, providing features that go beyond simple text input fields to enhance the prompt crafting process.

These tools help in several key ways:

- Prompt Creation: Offering templates, libraries, and guided interfaces to build effective prompts.

- Prompt Testing: Allowing users to run prompts against different AI models or versions to compare outputs.

- Prompt Optimization: Using analytics or AI-driven suggestions to improve prompt clarity and effectiveness.

- Prompt Management: Organizing, storing, and versioning prompts for reuse and collaboration.

- Output Analysis: Helping to evaluate the quality and relevance of AI-generated responses.

What Key Features Should the Best Prompt Engineering Tools Have?

When evaluating the best prompt engineering tools, several core functionalities stand out as essential for maximizing your AI’s potential and your own efficiency.

Structured Prompt Building

The ability to construct prompts with clear sections, variables, and conditional logic is paramount. This moves beyond simple text boxes to allow for more sophisticated prompt design.
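To make the idea concrete, here is a minimal sketch of a structured prompt built from named sections, variables, and one conditional section. It uses only Python’s standard `string.Template`; the section names (`Role`, `Task`, `Tone`, `Output format`) and the `build_prompt` helper are assumptions chosen for illustration, not the layout of any particular tool.

```python
from string import Template
from typing import Optional

# Hypothetical section layout; the section names are illustrative only.
PROMPT_TEMPLATE = Template(
    "Role: ${role}\n"
    "Task: ${task}\n"
    "${tone_section}"
    "Output format: ${output_format}"
)


def build_prompt(role: str, task: str, output_format: str, tone: Optional[str] = None) -> str:
    """Fill the template; the tone section is included only when a tone is given."""
    tone_section = f"Tone: {tone}\n" if tone else ""
    return PROMPT_TEMPLATE.substitute(
        role=role,
        task=task,
        tone_section=tone_section,
        output_format=output_format,
    )


prompt = build_prompt(
    role="Product copywriter",
    task="Write a two-sentence description of a reusable water bottle.",
    output_format="Plain text, no bullet points",
    tone="friendly",
)
print(prompt)
```

Keeping the sections in one template means every prompt of this kind has the same shape, and the optional tone line shows how simple conditional logic can live outside the prompt text itself.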

Model Agnosticism and Versioning

The best tools support multiple AI models (e.g., OpenAI’s GPT series, Anthropic’s Claude, Google’s Gemini) and allow for testing against different versions of these models. This is critical as models evolve rapidly.
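One way to picture model agnosticism is a thin layer that treats every model as "a function from prompt to response". The sketch below assumes exactly that; `run_across_models` and the two stub models are hypothetical stand-ins, and no real provider SDK is called.

```python
from typing import Callable, Dict

# A "model" here is any callable that maps a prompt string to a response string.
ModelFn = Callable[[str], str]


def run_across_models(prompt: str, models: Dict[str, ModelFn]) -> Dict[str, str]:
    """Send one prompt to every registered model and collect responses by label."""
    return {label: call(prompt) for label, call in models.items()}


# Stand-in models for illustration only; real adapters would wrap a provider SDK.
def stub_model_v1(prompt: str) -> str:
    return f"[v1] {prompt.upper()}"


def stub_model_v2(prompt: str) -> str:
    return f"[v2] {prompt[::-1]}"


results = run_across_models(
    "Summarize this ticket.",
    {"stub-v1": stub_model_v1, "stub-v2": stub_model_v2},
)
for label, response in results.items():
    print(f"{label}: {response}")
```

Because the comparison code depends only on the callable signature, swapping in a new model or a new model version means registering one more entry rather than rewriting the test harness.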

A/B Testing and Evaluation

Direct comparison of prompt variations is vital. Tools that enable A/B testing of prompts and provide metrics for evaluating output quality (like relevance, coherence, and adherence to instructions) are invaluable.
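As a rough illustration of A/B testing, the sketch below runs two prompt variants over the same inputs and compares a simple instruction-adherence score. Everything in it is an assumption made for the example: `stub_model` is a toy stand-in for an LLM, and the metric (required terms present, word limit respected) is only one of many ways to score output.

```python
from statistics import mean
from typing import Callable, List

REQUIRED_TERMS = ["durable", "price"]  # hypothetical instruction checklist
MAX_WORDS = 20                         # hypothetical length limit


def adherence_score(response: str, required_terms: List[str], max_words: int) -> float:
    """Score from 0 to 1: fraction of required terms present, zero if over the word limit."""
    if not required_terms:
        raise ValueError("required_terms must not be empty")
    if len(response.split()) > max_words:
        return 0.0
    lowered = response.lower()
    hits = sum(term.lower() in lowered for term in required_terms)
    return hits / len(required_terms)


def stub_model(prompt: str) -> str:
    """Toy stand-in for an LLM: it mentions price only when the prompt asks for it."""
    topic = prompt.rsplit(":", 1)[-1].strip()
    if "price" in prompt.lower():
        return f"The {topic} is durable and the price is fair."
    return f"The {topic} is durable."


def mean_score(template: str, items: List[str], model: Callable[[str], str]) -> float:
    """Average adherence of one prompt variant across all test inputs."""
    return mean(
        adherence_score(model(template.format(item=item)), REQUIRED_TERMS, MAX_WORDS)
        for item in items
    )


items = ["water bottle", "backpack"]
variant_a = "Describe the product: {item}"
variant_b = "Describe the product and mention its price: {item}"

score_a = mean_score(variant_a, items, stub_model)
score_b = mean_score(variant_b, items, stub_model)
print(f"Variant A: {score_a:.2f}")
print(f"Variant B: {score_b:.2f}")
```

The important design point is that both variants see identical inputs and an identical scoring function, so any difference in the averages can be attributed to the prompt wording.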

Prompt Templating and Libraries

Having a system to save, categorize, and reuse effective prompts as templates saves immense time and ensures consistency across projects. A shared library is even better for team collaboration.
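A prompt library can be sketched in a few lines. The example below assumes a simple in-memory store with hypothetical names (`PromptTemplate`, `PromptLibrary`); real tools add persistence, permissions, and search on top of the same basic idea.

```python
import json
from dataclasses import asdict, dataclass
from typing import Dict, List


@dataclass
class PromptTemplate:
    name: str
    category: str
    text: str  # uses str.format placeholders such as {product}


class PromptLibrary:
    """In-memory store for saving, categorizing, and reusing prompt templates."""

    def __init__(self) -> None:
        self._templates: Dict[str, PromptTemplate] = {}

    def save(self, template: PromptTemplate) -> None:
        self._templates[template.name] = template

    def by_category(self, category: str) -> List[str]:
        """Return the names of all templates in a category, sorted alphabetically."""
        return sorted(t.name for t in self._templates.values() if t.category == category)

    def render(self, name: str, **variables: str) -> str:
        """Fill a saved template with concrete values."""
        return self._templates[name].text.format(**variables)

    def to_json(self) -> str:
        """Serialize the library so it can be shared with teammates."""
        return json.dumps([asdict(t) for t in self._templates.values()], indent=2)


library = PromptLibrary()
library.save(PromptTemplate("product_blurb", "marketing", "Write a short blurb for {product}."))
library.save(PromptTemplate("bug_summary", "support", "Summarize this bug report: {report}"))

print(library.by_category("marketing"))
print(library.render("product_blurb", product="a reusable water bottle"))
```

Exporting with `to_json` is one simple way to turn a personal collection into a shared library that can be reviewed and stored alongside other project files.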

Integration Capabilities

Smooth integration with existing workflows, APIs, and development environments (like Python SDKs) allows these tools to become practical assets rather than standalone curiosities.

Collaboration Features

For teams, features like shared workspaces, version control, and commenting on prompts are essential for collective improvement and knowledge sharing.

Top Prompt Engineering Tools to Consider

The world of prompt engineering tools is dynamic, with new solutions emerging regularly. Here are some of the leading options available as of early 2026, each offering a unique set of capabilities:

| Tool Name | Key Features | Best For | Entity Mentioned |
|---|---|---|---|
| PromptPerfect | Automated prompt optimization, multi-model support, prompt debugging | Achieving optimal prompt performance automatically | PromptPerfect |
| LangSmith (by LangChain) | Debugging, tracing, evaluation of LLM applications, prompt management | Developing and debugging complex LLM applications | LangSmith, LangChain |
| Vellum | Prompt management, A/B testing, versioning, deployment to production | Teams managing prompts in production environments | Vellum |
| OpenAI Playground | Direct interaction with GPT models, parameter tuning, prompt experimentation | Quick experimentation and testing with OpenAI models | OpenAI, GPT-4 |
| Google AI Studio | Prototyping with Gemini models, prompt design, API integration | Building with Google’s Gemini family of models | Google AI Studio, Gemini |

Remember that ‘best’ is subjective and depends heavily on your specific needs, technical expertise, and the AI models you’re working with. For instance, if you’re heavily invested in the OpenAI ecosystem, the OpenAI Playground offers a straightforward entry point. Developers building complex applications often find platforms like LangSmith invaluable for their deep debugging and tracing capabilities. For teams focused on production deployment and rigorous testing, Vellum provides a more robust suite of management and evaluation features.

The global AI market size was valued at USD 200 billion in 2023 and is projected to reach USD 1.8 trillion by 2030, growing at a CAGR of 37%. This explosive growth underscores the increasing demand for tools that enhance AI usability and effectiveness. (Source: Statista, 2024)

PromptPerfect

PromptPerfect is designed to automatically refine and optimize user prompts for LLMs. It takes a basic prompt and iteratively improves it to elicit better responses from AI models. It supports various models and offers features for debugging and analyzing prompt performance.

LangSmith

Developed by the creators of LangChain, LangSmith is a powerful platform for debugging, testing, and monitoring LLM applications. It provides detailed traces of LLM calls, enabling developers to understand exactly how their prompts are being processed and what outputs are generated.

Vellum

Vellum focuses on enterprise-grade prompt management and deployment. It offers a centralized platform for teams to create, test, version, and deploy prompts into production applications. Its A/B testing and evaluation features are particularly strong.

OpenAI Playground

The OpenAI Playground is a web-based interface that allows users to experiment directly with OpenAI’s models, including GPT-3.5 and GPT-4. It’s excellent for quick testing of prompt ideas, adjusting parameters like temperature and max tokens, and understanding model behavior.
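To build intuition for the temperature setting, the sketch below shows the usual textbook formulation: scores (logits) are divided by the temperature before a softmax turns them into probabilities. The logits are made up for the example, and how any given provider implements sampling internally is an assumption here; max tokens, by contrast, simply caps the length of the response.

```python
import math
from typing import List


def softmax_with_temperature(logits: List[float], temperature: float) -> List[float]:
    """Convert raw scores to probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max before exponentiating for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.1]  # made-up scores for three candidate tokens
for temperature in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(logits, temperature)
    print(temperature, [round(p, 3) for p in probs])
```

Lower temperatures concentrate probability on the top-scoring option, which tends to give more repeatable output, while higher temperatures spread probability out and make responses more varied.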

Google AI Studio

Google AI Studio provides a web-based visual tool to quickly prototype prompts for Google’s Gemini models. It offers features for prompt design, testing, and generating API keys for integration into applications, making it a key tool for developers working with Google’s AI offerings.

How to Choose the Right Prompt Engineering Tool for Your Needs

Selecting the best prompt engineering tools requires a strategic approach, aligning the tool’s capabilities with your specific objectives and operational context. Consider these factors:

Define Your Primary Goal

Are you focused on rapid prototyping, deep debugging, team collaboration, or production deployment? Your main objective will heavily influence the type of tool that’s most suitable.

Assess Model Compatibility

Ensure the tool supports the specific LLMs and AI models you are using or plan to use. Model compatibility is non-negotiable for effective prompt engineering.

Evaluate Ease of Use vs. Power

Some tools are designed for beginners with intuitive interfaces, while others offer advanced functionalities for experienced developers. Balance the learning curve with the depth of features required.

Consider Integration and Scalability

How well does the tool integrate with your existing tech stack? Can it scale as your usage and team grow? These are critical for long-term viability.

Budget and Licensing

Tools range from free, open-source options to expensive enterprise solutions. Determine your budget and understand the licensing terms, especially for commercial use.

Integrating Prompt Engineering Tools into Your Workflow

Successfully integrating prompt engineering tools involves more than just adopting new software; it requires a shift in process and mindset. Here’s how to make it work:

- Standardize Prompt Creation: Use templates and guidelines provided by your chosen tool to ensure consistency.

- Establish Testing Protocols: Define how prompts will be tested, what metrics will be used for evaluation, and who is responsible for sign-off.

- Implement Version Control: Treat prompts like code. Use the tool’s versioning features to track changes, revert to previous versions, and understand prompt evolution.

- Foster Collaboration: Encourage team members to share prompts, provide feedback, and learn from each other within the tool’s collaborative environment.

- Monitor Performance: Continuously track the performance of prompts in production. Use the tool’s analytics to identify areas for improvement or prompts that are no longer effective.
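The "treat prompts like code" step above can be sketched as an append-only version history. This is a minimal illustration with hypothetical names (`PromptVersion`, `VersionedPrompt`); dedicated tools and ordinary source control offer far richer versioning than this.

```python
import hashlib
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class PromptVersion:
    number: int
    text: str
    note: str
    digest: str  # short content hash, useful for spotting identical versions


class VersionedPrompt:
    """Append-only history of one prompt, with support for reverting."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.history: List[PromptVersion] = []

    def commit(self, text: str, note: str) -> PromptVersion:
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]
        version = PromptVersion(len(self.history) + 1, text, note, digest)
        self.history.append(version)
        return version

    def current(self) -> PromptVersion:
        return self.history[-1]

    def revert_to(self, number: int) -> PromptVersion:
        """Re-commit an earlier version so the history itself is never rewritten."""
        old = self.history[number - 1]
        return self.commit(old.text, f"revert to v{number}")


blurb = VersionedPrompt("product_blurb")
blurb.commit("Write a blurb for {product}.", "initial draft")
blurb.commit("Write a friendly two-sentence blurb for {product}.", "add tone and length")
blurb.revert_to(1)

for version in blurb.history:
    print(version.number, version.digest, version.note)
```

Reverting by adding a new entry, rather than deleting later ones, keeps a full record of how the prompt evolved, which is what makes sign-off and later debugging possible.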

When I first started using dedicated prompt tools, I noticed a significant reduction in the time spent iterating on prompts for marketing copy. Instead of 20-30 attempts, I was often getting close to the desired output within 5-10, thanks to the structured testing and optimization features.

[Image: Team collaborating on prompt engineering tools. Caption: Team collaboration enhances prompt refinement.]

The Evolving Future of Prompt Engineering Tools

The field of prompt engineering is rapidly evolving, and so are the tools that support it. We can anticipate several key trends:

- Increased Automation: AI-powered prompt generation and optimization will become more sophisticated.

- Enhanced Agentic Capabilities: Tools will better support multi-step reasoning and autonomous AI agents that can self-prompt and self-correct.

- Deeper Model Integration: Tools will offer more granular control over model parameters and architectures.

- Visual Prompting: For multimodal models, tools will emerge to facilitate prompt creation using images, audio, and video.

- Focus on Responsible AI: Tools will incorporate features to detect and mitigate bias, ensure safety, and promote ethical AI outputs.

Research techniques such as Tree of Thoughts (ToT), a prompting approach rather than a tool, point towards more complex reasoning structures that future tools will likely help manage.

Frequently Asked Questions

What is the primary function of prompt engineering tools?

The primary function of prompt engineering tools is to enhance the process of creating, testing, optimizing, and managing prompts for AI models. They provide structure and specialized features to achieve more consistent, accurate, and efficient AI outputs.

Are prompt engineering tools only for developers?

No, prompt engineering tools cater to a wide range of users, from content creators and marketers to researchers and developers. Many tools offer user-friendly interfaces suitable for those without deep coding expertise.

How do prompt engineering tools improve AI output quality?

These tools improve AI output quality by enabling systematic testing of prompt variations, identifying optimal phrasing, and ensuring prompts are clear and unambiguous to the AI model, leading to more relevant and coherent responses.

Can prompt engineering tools work with any AI model?

Many advanced prompt engineering tools are model-agnostic, supporting a variety of LLMs from different providers like OpenAI, Google, and Anthropic. However, some tools are specific to certain model families.

What is prompt optimization?

Prompt optimization is the process of refining prompts to maximize the quality, relevance, and efficiency of an AI model’s response. Prompt engineering tools often automate or assist in this process.

Start Mastering Your AI Prompts Today

The journey to mastering AI interactions is significantly smoother and more effective with the right support. By using the best prompt engineering tools, you can overcome common challenges, unlock higher-quality AI outputs, and simplify your creative and analytical processes. Explore the options available, choose a tool that aligns with your goals, and begin transforming your AI collaborations. An AI model’s output is only as good as the prompts you provide, and the right tools make all the difference.

Sabrina

Expert contributor to OrevateAI. Specialises in making complex AI concepts clear and accessible.