Cross-Entropy Loss in LLMs Explained

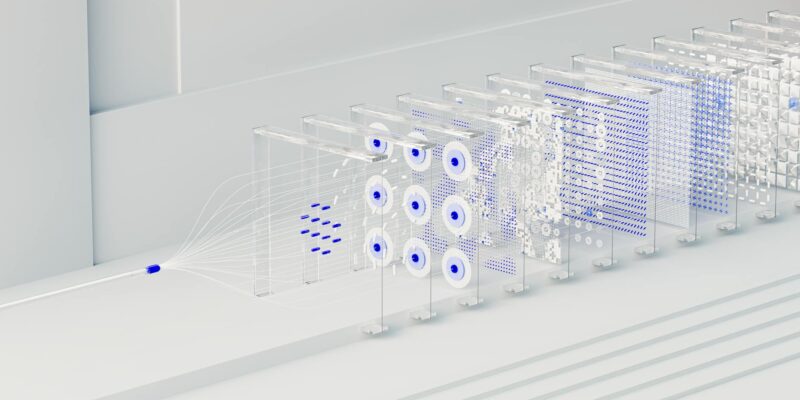

Cross-entropy loss is a fundamental concept in training large language models (LLMs). It measures how well the model's predicted probabilities match the actual outcomes, and minimizing it guides learning toward more accurate and relevant text.
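To make "how well predicted probabilities match actual outcomes" concrete, here is a minimal sketch in plain Python. It assumes the common next-token setup, where the loss for one prediction is the negative log of the probability the model assigned to the correct token. The 3-token vocabulary, the probability values, and the function names are hypothetical, chosen only for illustration.

```python
import math

def cross_entropy(probs, target_index):
    """Loss for one prediction: -log of the probability given to the correct token."""
    return -math.log(probs[target_index])

def mean_cross_entropy(batch_probs, targets):
    """Average the per-prediction losses over a batch."""
    losses = [cross_entropy(p, t) for p, t in zip(batch_probs, targets)]
    return sum(losses) / len(losses)

# Hypothetical predictions over a 3-token vocabulary; the correct token is index 0.
confident = [0.9, 0.05, 0.05]  # most probability on the correct token
unsure = [0.4, 0.3, 0.3]       # probability spread across tokens

loss_confident = cross_entropy(confident, 0)
loss_unsure = cross_entropy(unsure, 0)

print(f"confident: {loss_confident:.3f}")
print(f"unsure:    {loss_unsure:.3f}")
print(f"batch mean: {mean_cross_entropy([confident, unsure], [0, 0]):.3f}")
```

The confident prediction gets the lower loss, so lowering the loss means putting more probability on the tokens that actually occur.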
